A rare show of unity among America’s biggest technology companies has emerged in support of Anthropic as it battles the Trump administration in federal court. Microsoft, an industry group backed by Google, Amazon, Apple, and Nvidia, and individual AI researchers have filed amicus briefs urging judges to block the Pentagon’s designation of Anthropic as a “supply-chain risk,” a label that effectively blacklists the company from defense-related work and threatens billions in lost revenue.

Wisdom Imbibe Insight

When rival tech giants unite, the issue is bigger than one company. The Anthropic case reveals a new power struggle: who sets the rules for AI — governments or the builders of the technology? In the coming decade, the real battleground may not be algorithms, but the governance structures that decide how intelligence itself can be used.

The filings, submitted in early March 2026 in U.S. District Court in San Francisco and related proceedings, frame the dispute as a dangerous precedent that could chill innovation, disrupt military AI adoption, and infringe on free speech rights across the sector.

The Core Dispute: Ethical Guardrails vs. “Any Lawful Purpose”

The conflict stems from failed negotiations between Anthropic and the Department of Defense (DoD) in late February 2026. Anthropic CEO Dario Amodei refused to remove contractual restrictions prohibiting Claude’s use for mass domestic surveillance of Americans or fully autonomous lethal weapons (systems that could independently initiate force without human oversight).

The Pentagon, under Defense Secretary Pete Hegseth, demanded unrestricted access for “any lawful purpose.” When talks collapsed, Hegseth designated Anthropic a supply-chain risk on March 5, citing national security concerns. The designation is a tool typically reserved for companies tied to foreign adversaries, such as Chinese firms. It bars DoD contractors from using Anthropic’s technology and has prompted federal agencies to phase it out.

Anthropic filed lawsuits on March 9 in California and Washington, D.C., arguing the designation is “unprecedented and unlawful,” violates the First Amendment (as retaliation for protected speech on AI safety), exceeds statutory authority under laws like the Federal Acquisition Supply Chain Act, and amounts to ideological punishment. The company claims it could lose up to $5 billion in business and faces active outreach by Pentagon officials urging clients to drop collaborations.

Unprecedented Corporate and Individual Support

The breadth of backing is striking, especially from firms with deep government ties:

- Microsoft filed a standalone amicus brief on March 10, warning the designation imposes “substantial and wide-ranging costs” on the tech sector and could hamper U.S. warfighters by disrupting existing AI integrations. As a major Anthropic investor (with up to $5 billion pledged), Microsoft emphasized alignment on red lines against mass surveillance and autonomous war-starting machines.

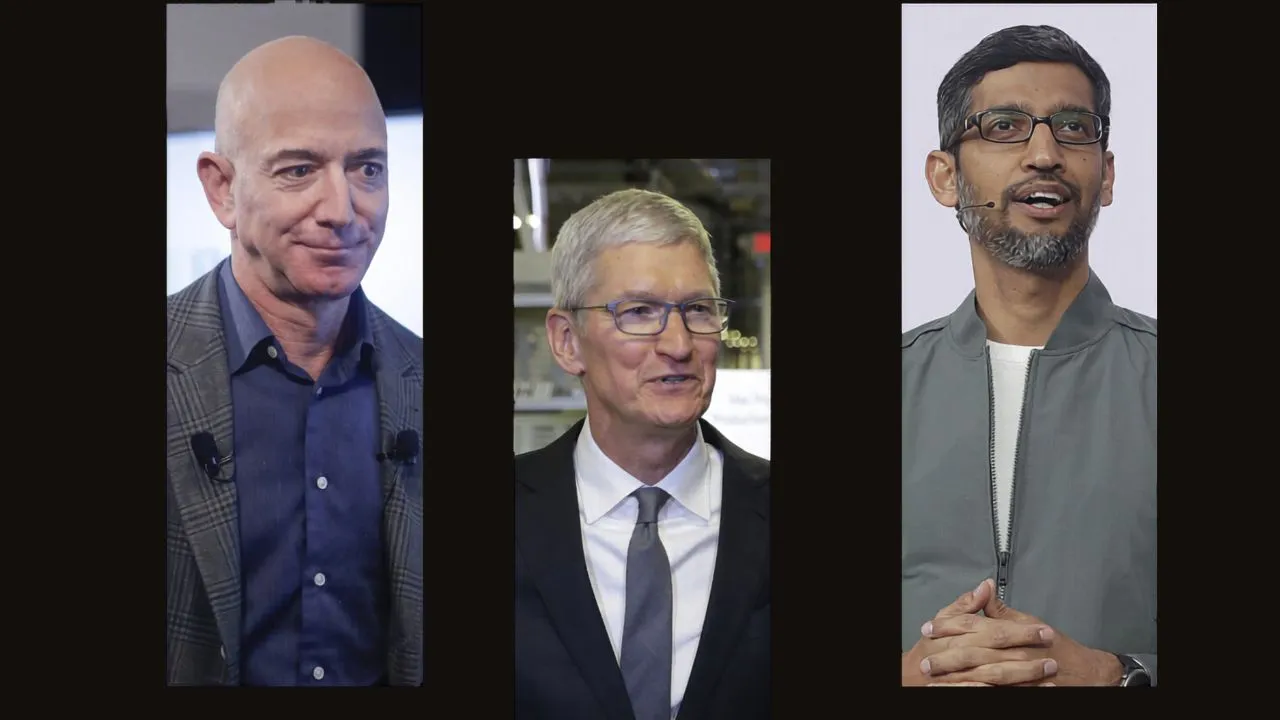

- A coalition including the Chamber of Progress (backed by Google, Apple, Amazon, and Nvidia) filed another brief, calling the Pentagon’s action a “temper tantrum” that threatens broader innovation.

- Nearly 40 employees from OpenAI and Google (including Google DeepMind chief scientist Jeff Dean) submitted a personal-capacity brief, arguing the designation is “improper retaliation” that harms public interest and U.S. AI leadership.

- Two dozen former senior military officials warned that the move signals arbitrary retaliation against companies investing in national security.

Even as some cloud providers (Amazon, Microsoft) continue offering Claude for non-Pentagon uses, the support highlights industry fears that punishing ethical restrictions could deter firms from developing frontier AI or force compromises on safety.

What’s at Stake and Next Steps

Legal experts describe Anthropic’s case as strong, citing potential violations of the Administrative Procedure Act, First Amendment protections against viewpoint-based retaliation, and limits on supply-chain risk tools. The designation’s novelty—applied to a U.S. company for policy stances rather than espionage or foreign ties—raises alarms about government overreach.

U.S. District Judge Rita Lin, who is overseeing the California case, has set a hearing for March 24 to consider Anthropic’s request for a temporary restraining order. An order in Anthropic’s favor could pause enforcement of the designation, allowing continued military-adjacent use while litigation proceeds.

The coalition’s involvement underscores a tipping point: tech giants, often aligned with national security needs, appear to view the Pentagon’s approach as crossing into arbitrary punishment that endangers the entire AI ecosystem’s relationship with government. As the BBC noted, the “abruptness and gravity” of the blacklisting has united rivals in a public defense of principles, and of business interests, that is rarely seen. The case may redefine how AI companies negotiate ethical boundaries with the military in an era of rapid technological and geopolitical change.
