In the current technological landscape, a massive shift is approaching that most of society has yet to fully acknowledge. Dario Amodei, the co-founder and CEO of Anthropic, describes this moment with a haunting metaphor: a tsunami is visible on the horizon, yet observers on the shore are desperately coming up with explanations for why the wave isn’t real. As Amodei notes, the common refrain is that the impending shift is “just a trick of the light.”

Wisdom Imbibe Insight

The AI tsunami isn’t about machines replacing humans — it’s about humans redefining their value. When intelligence becomes manufactured, judgment becomes priceless. The winners won’t be the fastest coders, but the clearest thinkers. In an age of synthetic abundance, critical reasoning, ethical leadership, and adaptability become the ultimate wealth engines. The future belongs to prepared minds.

While AI has become increasingly personal—surprising users with its ability to anticipate their thoughts and assist in daily tasks—there remains a profound gap between the technology’s actual trajectory and public awareness of its implications. To navigate this “Adolescence of Technology,” we must look past the mirage and confront the fundamental principles driving this shift.

1. Intelligence is a “Chemical Reaction” of Three Ingredients

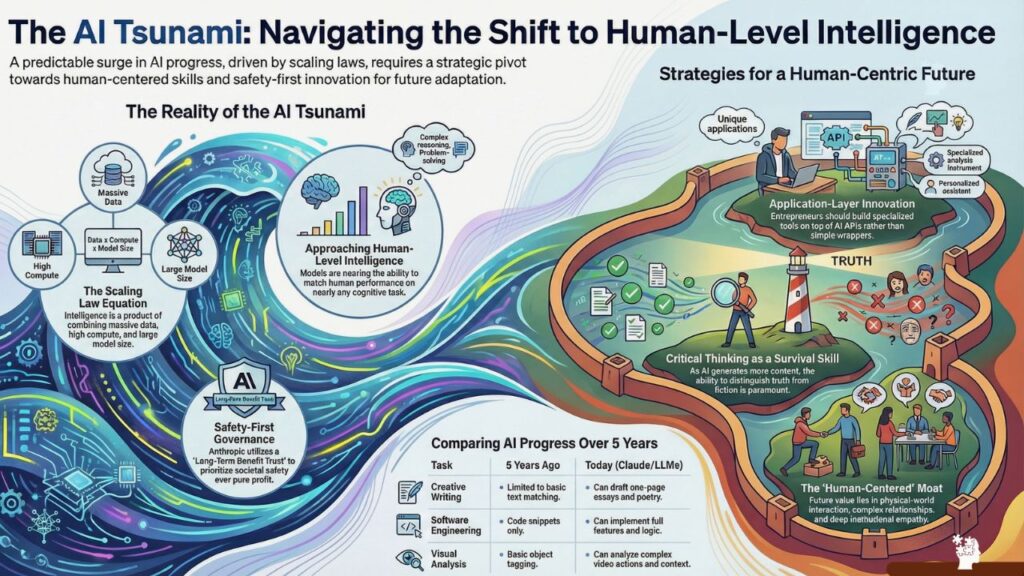

One of the most foundational concepts in modern AI is the “scaling law,” a principle Amodei championed early on. He explains the emergence of intelligence not as a mysterious fluke, but as the predictable result of a manufactured formula.

- The Three Ingredients: Just as a chemical reaction requires specific elements in the right proportions to produce a product, AI intelligence is the product of three ingredients: data, compute, and model size.

- A Shift in Capability: Five years ago, computing was largely about “searching text”—matching queries to existing information on the web. Today, AI has moved toward “thinking for itself.” It handles hypotheticals and writes original code in ways that were impossible half a decade ago.

- The “Manufactured” Mind: The idea that intelligence can be effectively manufactured simply by increasing these three inputs is highly counter-intuitive. It challenges the long-held biological “monopoly” on cognition, suggesting that what we once considered a sacred, mystical spark is actually an emergent property of scale.

“Intelligence is the product of a chemical reaction… if you put in the ingredients of data and model size, what you get out is intelligence.” — Dario Amodei
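To make the "predictable formula" idea concrete, here is a minimal sketch in Python. It assumes the power-law shape commonly reported in published scaling-law research, where a model's error (loss) falls smoothly as an input such as data or model size grows. The function names, the coefficient, and the exponent are illustrative placeholders, not measured values and not figures from Amodei. Loss is only a rough proxy for what the article calls intelligence.

```python
def power_law_loss(scale: float, coefficient: float = 10.0, exponent: float = 0.1) -> float:
    """Toy scaling curve: loss = coefficient * scale ** (-exponent).

    `scale` stands in for one ingredient (data, compute, or model size).
    `coefficient` and `exponent` are made-up placeholders for illustration.
    """
    if scale <= 0:
        raise ValueError("scale must be positive")
    return coefficient * scale ** (-exponent)


def loss_ratio_per_doubling(exponent: float = 0.1) -> float:
    """Under a power law, doubling the input multiplies loss by the same factor every time."""
    return 2.0 ** (-exponent)


if __name__ == "__main__":
    # Each doubling of the (hypothetical) input shrinks loss by a constant ratio,
    # which is what makes the curve easy to extrapolate.
    for scale in (1e6, 2e6, 4e6, 8e6):
        print(f"scale={scale:.0e}  toy loss={power_law_loss(scale):.4f}")
    print(f"ratio per doubling: {loss_ratio_per_doubling():.4f}")
```

The point of the sketch is the shape, not the numbers: if improvement per doubling is constant, adding more of the ingredients yields a foreseeable gain rather than a surprise.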

2. The “Internal MRI” of the AI Brain

For years, deep learning models were treated as “black boxes.” Amodei describes the old way of building AI as similar to watching a snowflake form: researchers trained models in an emergent way and watched them grow. Anthropic is now moving past this through the science of interpretability.

This field acts as a sort of “internal MRI” for neural networks. Rather than guessing why a model provides a certain answer, researchers are now identifying specific “neurons” and circuits. For instance, they have found distinct clusters responsible for understanding rhymes in poetry.

Understanding the “why” behind AI decisions is more than a technical curiosity; it is a critical safety win. By seeing inside the “brain” of the AI, developers can ensure the system is operating on the correct principles. We are moving from being passive observers of the “snowflake” to active architects who understand the internal geometry of the machine.

3. Power Concentration vs. The Long-Term Benefit Trust

Amodei expresses an acute “discomfort” with the concentration of power that has occurred in the AI industry almost overnight. There is a certain randomness to how a few individuals ended up leading companies that will soon power massive portions of the global economy.

- The Long-Term Benefit Trust (LTBT): To counter this, Anthropic implemented the LTBT—a body of financially disinterested individuals who ultimately appoint the majority of the board. It is a specific mechanism to ensure that no single person’s ego or profit motive dictates the path of the technology.

- The Strategy of Self-Regulation: Anthropic often advocates for government regulation, such as California’s SB 53, that primarily targets itself and its largest competitors. By supporting transparency laws for companies with over $500 million in revenue, Anthropic is attempting to “steer the car” away from disaster before the industry reaches full maturity.

“We’re steering this car. We’re steering it towards a good place, but also there are trees, there are potholes. And so what we need to do is we need to steer away from the trees and the potholes. We might need to occasionally slow down a bit… in order to make sure that we steer in the right direction.”

4. The End of “Coding” and the Shift in Human Value

The rise of AI has sparked significant anxiety in global IT hubs like India. Amodei offers a nuanced perspective on the future of work, distinguishing between the technical “act” and the broader “objective.”

Amodei, who has met with major Indian IT conglomerates, views India not just as a consumer market but as a critical partner in “systems integration.” These companies possess a “moat” of local institutional knowledge that AI cannot easily replicate. While Claude Code might automate the syntax of a program, the human engineer still acts as the architect, and tools like Cowork are designed to help non-technical users bridge the gap.

- Amdahl’s Law and the Radiologist: Amodei points to radiology to illustrate a shift in value. Amdahl’s Law says that when one part of a process is sped up, the overall gain is capped by the parts that were not. By his account, AI has become better than humans at reading medical scans, yet the number of radiologists hasn’t plummeted. Once the “most technical” part of the job was automated, the un-automated part (human-centered communication and walking the patient through the scan) became the limiting factor, and therefore the most valuable.

- Managing AI Teams: The future professional will likely move from being an individual contributor to “managing teams of AI models.”

- Critical Thinking as Survival: In a world flooded with synthetic “fakes,” basic critical thinking and “street smarts” become the ultimate survival skills. The ability to apply first-principles reasoning to distinguish fact from fiction will be more valuable than any technical proficiency.
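The radiologist example above invokes Amdahl’s Law, which in its standard form says that if a fraction p of a job is made s times faster, the whole job speeds up by 1 / ((1 − p) + p / s). The short Python sketch below works through that formula. The 60% figure for scan reading and the 100x speedup are hypothetical numbers chosen for illustration; they do not come from Amodei or from any radiology data.

```python
import math


def amdahl_speedup(automated_fraction: float, task_speedup: float) -> float:
    """Overall speedup when `automated_fraction` of the work runs `task_speedup` times faster."""
    if not 0.0 <= automated_fraction <= 1.0:
        raise ValueError("automated_fraction must be between 0 and 1")
    if task_speedup <= 0:
        raise ValueError("task_speedup must be positive")
    remaining_fraction = 1.0 - automated_fraction
    return 1.0 / (remaining_fraction + automated_fraction / task_speedup)


def speedup_ceiling(automated_fraction: float) -> float:
    """Best possible overall speedup, reached only if the automated part takes zero time."""
    remaining_fraction = 1.0 - automated_fraction
    if remaining_fraction == 0.0:
        return math.inf
    return 1.0 / remaining_fraction


if __name__ == "__main__":
    # Hypothetical split: scan reading is 60% of the job and AI makes it 100x faster.
    scan_reading_share = 0.6
    print(f"overall speedup: {amdahl_speedup(scan_reading_share, 100.0):.2f}x")
    print(f"ceiling:         {speedup_ceiling(scan_reading_share):.2f}x")
```

With these made-up inputs the ceiling is 1 / 0.4 = 2.5x no matter how good the scan-reading model becomes. The remaining 40%, the human-facing work, sets the limit, which is why it becomes the scarce and valuable part of the job.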

5. The “Too Weird to Be True” Fallacy

One of the greatest barriers to preparing for the AI tsunami is psychological. Amodei identifies a common human tendency to dismiss major shifts because they seem “too weird” or “too crazy” to actually happen.

Most people fail to see the future even when the data is public because they refuse to follow empirical curves to their logical conclusions. Amodei suggests that you can “predict the future for free” by combining empirical observation (the scaling curves) with first-principles reasoning. He warns against reasoning from “pure logic” alone; one must look at what the machines are actually doing today and extrapolate. Dismissing that extrapolation because it feels like science fiction only leaves society less prepared.

Conclusion: Curing Diseases and the Choice Ahead

We are currently navigating the “Adolescence of Technology”—a period of high risk where the technology is powerful but not yet fully governed. Yet, on the other side of this risk is a vision Amodei calls “Machines of Loving Grace.”

This positive vision includes a biotech renaissance, where AI helps design programmable peptide therapies and genetically engineered cell-based treatments to attack cancer. The design space for these digital-like biological therapies is vast, and AI is the key to unlocking it.

As we export cognition to machines, we face a fundamental choice: Will we use this newfound freedom to enrich ourselves intellectually, or will we let our cognitive muscles atrophy and become “stupider” as a race? The technology is a lever; the direction it moves is still a human choice.
